Introduction

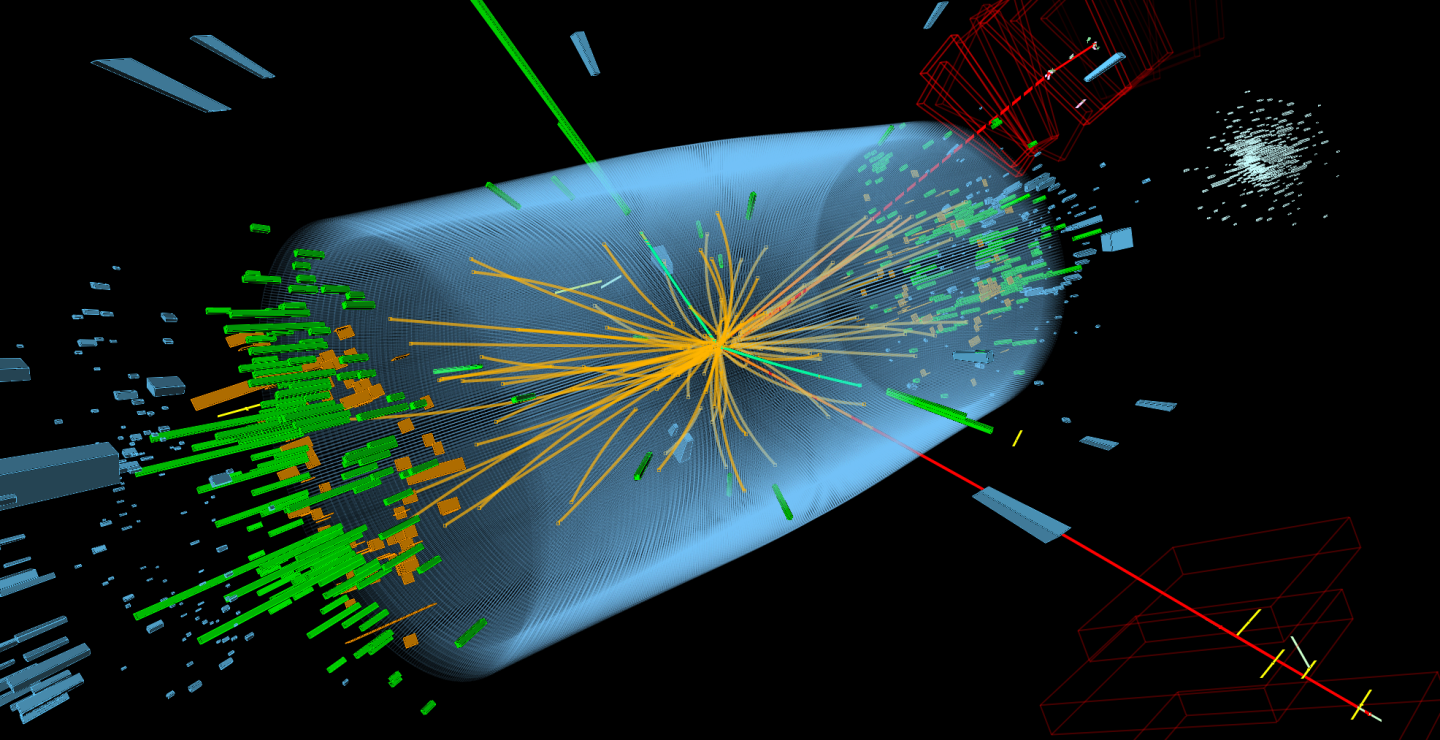

Time tagging of events in a high-energy detector system refers to the process of accurately measuring and recording the timing information associated with the detected events. In particle physics experiments or other high-energy physics experiments, it is crucial to have precise time information to study the behavior of particles and their interactions.

The time tagging process typically involves associating a timestamp with each event detected by the system. This timestamp provides information about when the event occurred relative to a reference time, often synchronized with a master clock. The accuracy of the time tagging depends on the resolution of the timing system and the synchronization methods employed.

Here are some examples of applications of time tagging in high-energy detector systems:

- Particle Identification: By accurately tagging the arrival times of particles at different detector elements, scientists can study the behavior and properties of different types of particles. The time information can help in distinguishing between particles with different masses and energies.

- Event Reconstruction: Time tagging is crucial for reconstructing the trajectory and interaction points of particles within a detector system. By precisely measuring the time delays between different detector elements, scientists can reconstruct the path and timing of particles, allowing them to study particle decays, scattering processes, and other interactions.

- Time-of-Flight Measurements: Time tagging is essential in time-of-flight (TOF) detectors. TOF detectors measure the time taken for particles to travel a known distance within the detector system. Accurate time tagging enables precise determination of particle velocities and energies.

- Trigger Systems: High-energy experiments often employ trigger systems to select interesting events for further analysis. Time tagging is used in trigger algorithms to determine whether an event meets specific timing criteria, such as a coincidence or a specific time window, to trigger data readout.

- Background Rejection: In experiments where background events are common, accurate time tagging can be used to differentiate between signal events and background noise. By applying timing cuts and correlations, scientists can reject events that do not align with the expected timing patterns, enhancing the purity of the collected data.

- Calorimetry: Time tagging is employed in calorimeters to measure the time of energy deposits. This information helps to distinguish between prompt energy depositions from the primary particle and late energy deposits from secondary particles or noise sources.

- Neutron time-of-flight spectroscopy: it is a technique used to study the energy and dynamics of neutrons by measuring their time-of-flight from a source to a detector. It is a fundamental tool in the field of neutron scattering, providing valuable information about the structure and behavior of materials at the atomic and molecular levels. In neutron time-of-flight spectroscopy, a pulsed neutron source emits a burst of neutrons that travels towards a sample of interest. The neutrons have different energies, and their velocities are determined by their energy and mass. As the neutrons travel through the sample, they interact with the atomic nuclei and experience scattering or absorption events. The time it takes for a neutron to reach the detector after being emitted from the source is recorded, forming the time-of-flight spectrum. The time-of-flight spectrum contains information about the energy distribution of the neutrons that interacted with the sample. Neutrons with higher energies travel faster and have shorter flight times, while neutrons with lower energies move slower and have longer flight times. By analyzing the shape and intensity of the time-of-flight spectrum, scientists can extract valuable insights about the scattering processes and the properties of the sample.
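The relation between flight time and neutron energy described in the last item can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the non-relativistic formula E = m v² / 2 with v = L / t is used, and the 10 m flight path and the flight times are hypothetical values, not parameters of any specific instrument.

```python
# Non-relativistic neutron energy from time of flight (illustrative sketch).
NEUTRON_MASS_KG = 1.67492749804e-27   # CODATA neutron mass
JOULE_PER_EV = 1.602176634e-19        # SI definition of the electronvolt


def tof_to_energy_ev(flight_path_m: float, flight_time_s: float) -> float:
    """Kinetic energy in eV of a neutron covering flight_path_m in flight_time_s."""
    if flight_time_s <= 0:
        raise ValueError("flight time must be positive")
    velocity = flight_path_m / flight_time_s               # v = L / t
    energy_joule = 0.5 * NEUTRON_MASS_KG * velocity ** 2   # E = m v^2 / 2
    return energy_joule / JOULE_PER_EV


# Hypothetical numbers: a 10 m flight path and three measured flight times.
for t_us in (4545.0, 1000.0, 100.0):
    energy = tof_to_energy_ev(10.0, t_us * 1e-6)
    print(f"t = {t_us:8.1f} us  ->  E = {energy:.4g} eV")
```

Shorter flight times map to higher energies, which is why the timestamp resolution directly limits the energy resolution of the measurement.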

In time tagging applications, a readout in list mode refers to a data acquisition technique where individual events or measurements are recorded as a list of data points, typically consisting of the event timestamp and associated information. This approach is particularly suitable for time tagging applications for several reasons:

- Flexibility: List mode readout allows for maximum flexibility in data analysis. Each event’s timestamp and associated information are recorded individually, preserving the full timing information without any imposed constraints or predefined time bins. This flexibility enables researchers to apply various analysis techniques and extract the most relevant information from the data.

- High Time Resolution: List mode readout provides the highest time resolution achievable by the detector system. It allows for precise measurements of the event arrival times, often at sub-nanosecond or even picosecond scales. This level of time resolution is critical in time tagging applications where precise timing information is essential for accurate event characterization and analysis.

- Event Correlations: List mode readout allows for the analysis of event correlations by retaining the temporal relationship between individual events. Researchers can investigate correlations in the timing of events, such as coincidences or time intervals between specific events, which can provide valuable insights into the physics processes under study.

- Offline Processing: With list mode readout, all data is saved, enabling offline processing and analysis. This is advantageous because it allows researchers to revisit the data, apply different analysis techniques, or develop new algorithms at a later stage. It also enables the extraction of additional information or the implementation of more advanced analysis methods as they become available.

- Event Reconstruction: List mode readout preserves all relevant information for event reconstruction. The individual timestamps and associated data allow for precise reconstruction of event trajectories, interaction points, and other properties of interest. This capability is crucial in high-energy physics experiments where accurate event reconstruction is essential for understanding particle interactions and extracting meaningful physics results.
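As an illustration of the offline flexibility described above, the sketch below searches for coincidences between two list-mode timestamp streams. The function name, the channel data, and the window values are hypothetical; the point is only that the same stored list can be re-analyzed with different criteria after the acquisition.

```python
from bisect import bisect_left, bisect_right


def find_coincidences(ts_a, ts_b, window):
    """Return (a, b) timestamp pairs with |a - b| <= window.

    Both inputs must be sorted lists of integer timestamps (clock ticks).
    """
    pairs = []
    for a in ts_a:
        lo = bisect_left(ts_b, a - window)    # first b >= a - window
        hi = bisect_right(ts_b, a + window)   # one past the last b <= a + window
        pairs.extend((a, b) for b in ts_b[lo:hi])
    return pairs


# Hypothetical list-mode records from two channels, in clock ticks.
channel_a = [100, 250, 400, 900]
channel_b = [103, 260, 395, 1200]

print(find_coincidences(channel_a, channel_b, window=5))
# The same stored data can be re-analyzed later with a wider window.
print(find_coincidences(channel_a, channel_b, window=10))
```

With histogram-mode readout the choice of window would have to be made before the acquisition; with list mode it becomes an analysis parameter.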

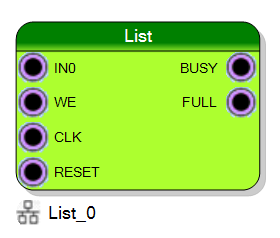

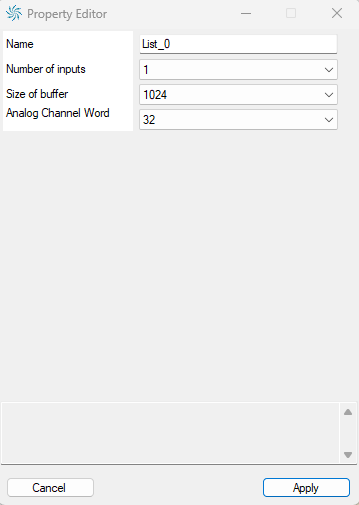

List component

The block represents a list of data that is buffered in a FIFO and can be downloaded with the List Module tool in the Resource Explorer or with the Sci-SDK library. When creating the block, the user can specify the data size and the number of data words to buffer. Data is presented on the IN input and written to memory by driving the WR input HIGH. The CLK input is the clock signal, while the RESET input clears the buffer. The BUSY output is HIGH while data is being downloaded, and the FULL output is HIGH when the memory is full.

A FIFO (First-In, First-Out) memory, also known as a queue, is a type of memory structure that operates based on the principle of preserving the order in which data elements are stored and retrieved. It follows a First-In, First-Out data access policy, meaning that the data item that enters the memory first will be the first to be read out.

In a FIFO memory, data elements are written or “enqueued” at one end of the memory, called the “write” or “input” end, and they are read or “dequeued” from the other end, known as the “read” or “output” end. The data elements are stored in the memory in the same sequence they were received, forming a queue-like structure.
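The first-in, first-out behavior can be modeled in software with a bounded queue. The class below is a toy model written for illustration only: it is not the firmware block, and the names `Fifo`, `write`, `read`, and `full` are hypothetical, loosely mirroring the WR input and FULL output described above.

```python
from collections import deque


class Fifo:
    """Toy software model of a fixed-depth FIFO (not the firmware block itself)."""

    def __init__(self, depth: int):
        self.depth = depth
        self._words = deque()

    @property
    def full(self) -> bool:
        return len(self._words) >= self.depth

    def write(self, word: int) -> bool:
        """Enqueue a word; return False (word dropped) if the FIFO is full."""
        if self.full:
            return False
        self._words.append(word)
        return True

    def read(self):
        """Dequeue the oldest word, or return None if the FIFO is empty."""
        return self._words.popleft() if self._words else None


fifo = Fifo(depth=4)
for word in (10, 20, 30, 40, 50):       # the fifth write finds the FIFO full
    accepted = fifo.write(word)
    print(word, "accepted" if accepted else "dropped")
print([fifo.read() for _ in range(4)])  # words come out in arrival order
```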

Realtime data acquisition

In a FIFO memory, it is essential to have the readout operation consistently faster on average than the data write operation to avoid losing events or data. This requirement is crucial to maintain the integrity and completeness of the stored data. Here’s why:

- Data Overflow Prevention: If the readout operation is slower than the data write operation, the memory may fill up with data faster than it can be read. As a result, when new data arrives and the memory is already full, there is no space to store the incoming events. This leads to data overflow, and any events that cannot be accommodated are lost or overwritten. By having the readout faster, it ensures that the memory is emptied at a rate sufficient to make room for new data, preventing data loss.

- Event Synchronization: In certain applications, it is essential to synchronize events or data from multiple sources. If the readout operation is slower than the write operation, the timing relationship between events may be distorted or lost. Having a faster readout ensures that events can be read and processed in a timely manner, preserving their temporal relationships and enabling accurate synchronization.
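The overflow argument can be made concrete with a small simulation. This is a simplified sketch with constant write and read rates per time step (real event rates fluctuate); the function name and all numbers are hypothetical, apart from the 1024-word depth, which matches the list size used later in this document.

```python
def simulate_fifo(depth, writes_per_step, reads_per_step, steps):
    """Return (words_lost, final_fill) for a FIFO with constant write/read rates."""
    fill = 0
    lost = 0
    for _ in range(steps):
        # Writer side: words that do not fit into the free space are lost.
        free = depth - fill
        stored = min(writes_per_step, free)
        lost += writes_per_step - stored
        fill += stored
        # Reader side: drain up to reads_per_step words.
        fill -= min(reads_per_step, fill)
    return lost, fill


# Readout slower than the writer: the buffer fills up, then words are lost.
print(simulate_fifo(depth=1024, writes_per_step=10, reads_per_step=8, steps=1000))
# Readout faster than the writer: nothing is lost.
print(simulate_fifo(depth=1024, writes_per_step=8, reads_per_step=10, steps=1000))
```

A deeper buffer only delays the first loss when the average readout rate is too low; it helps with short bursts, not with a sustained rate mismatch.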

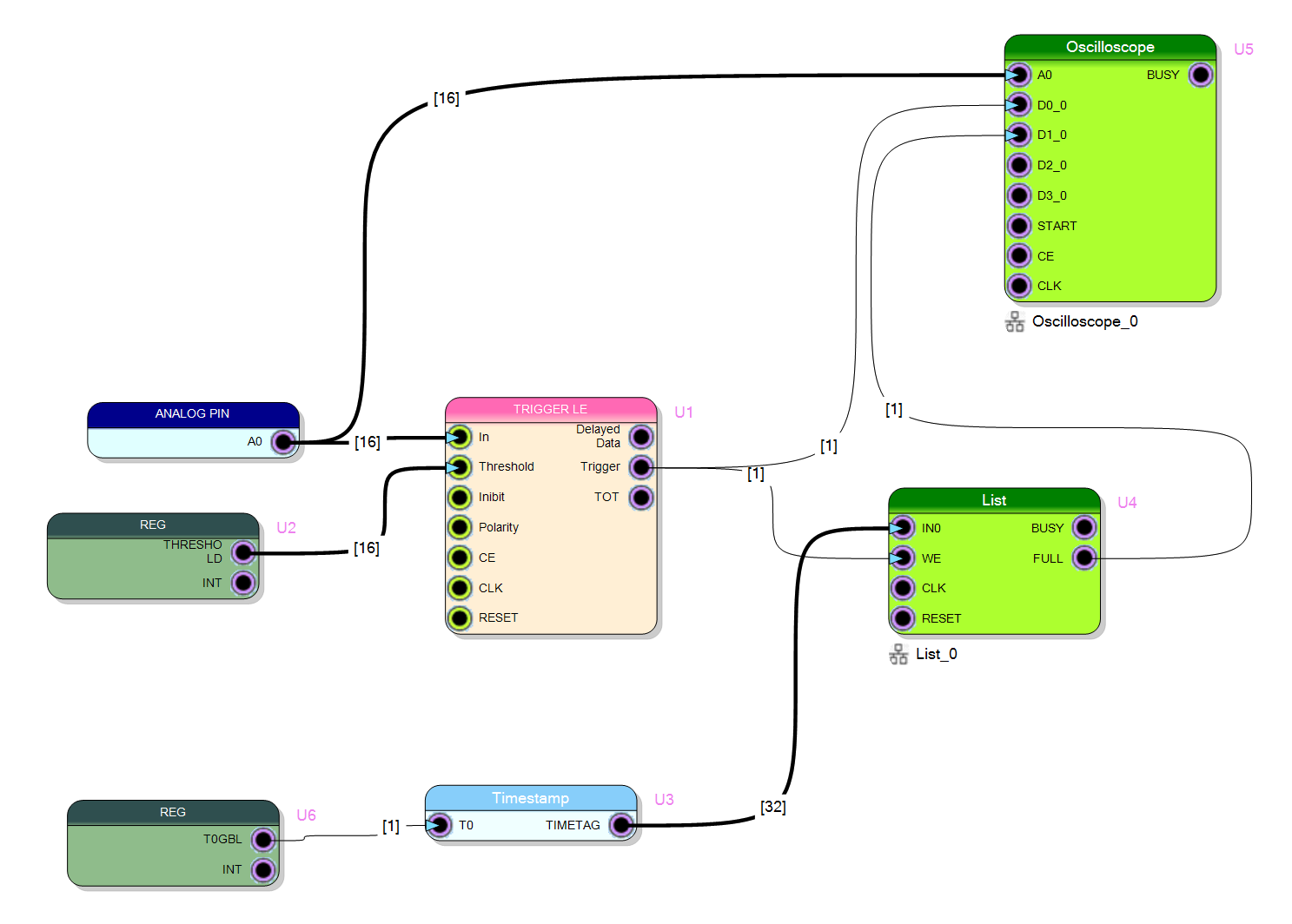

Time Tagging Block Diagram in Sci-Compiler

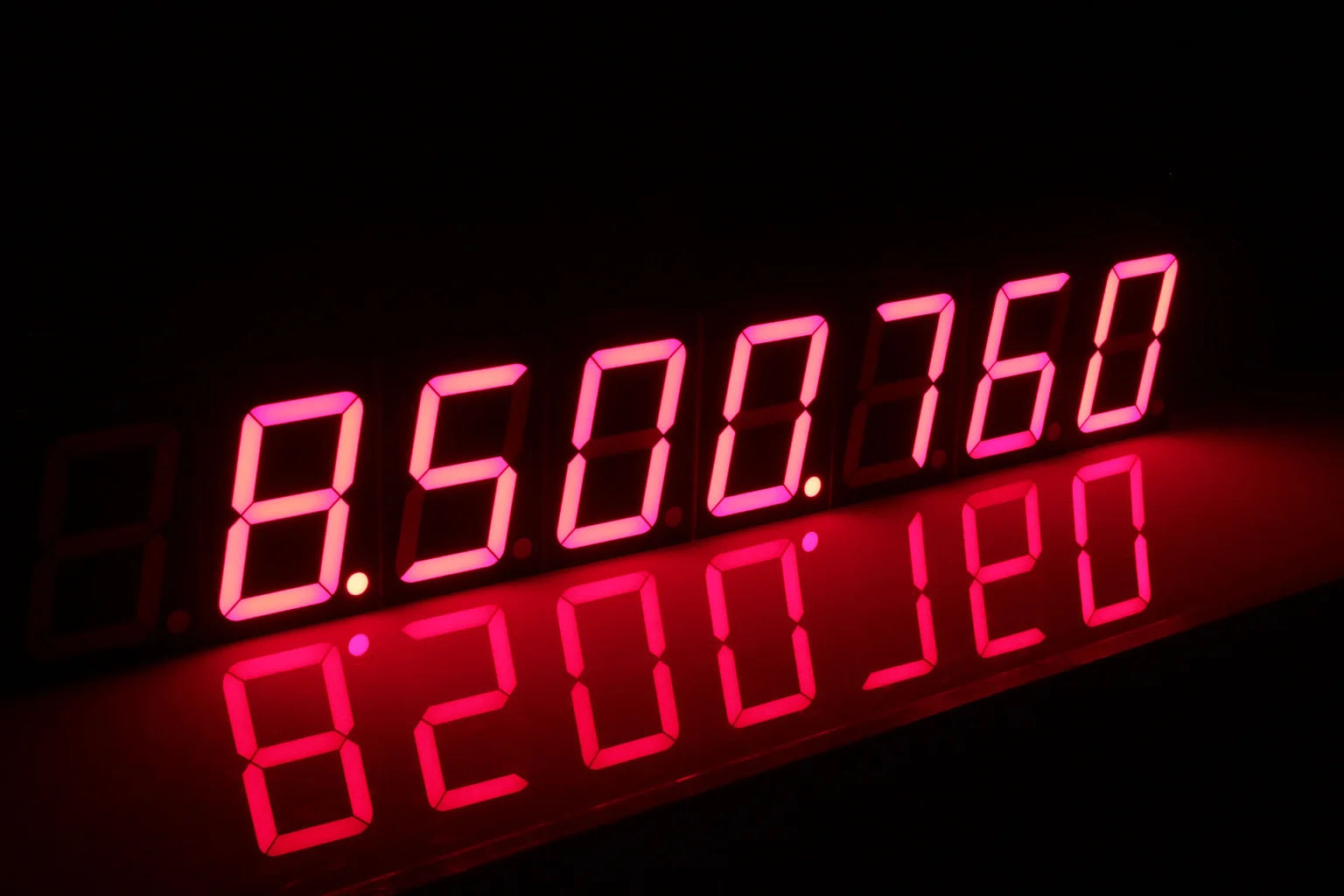

The block diagram uses the trigger component to identify particles. When the trigger fires, it commits the data to the LIST. The data is a 32-bit word coming from the TIMESTAMP module. The TIMESTAMP module is a counter driven by a specific board clock, ranging from 50 to 500 MHz. It includes clock domain crossing logic in order to decouple the counting clock from the interface clock.

The List is configured with a 32-bit data size and a 1024-word buffer.
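A practical consequence of a 32-bit timestamp is that the counter rolls over after 2^32 ticks. The sketch below converts ticks to seconds and computes the rollover period for a few clock frequencies inside the 50 to 500 MHz range mentioned above. The helper names are hypothetical, and 125 MHz is picked only as an example value.

```python
COUNTER_BITS = 32  # width of the timestamp word stored in the list


def ticks_to_seconds(ticks: int, clock_hz: float) -> float:
    """Convert a tick count into seconds for a given counting clock."""
    return ticks / clock_hz


def rollover_period_s(clock_hz: float, bits: int = COUNTER_BITS) -> float:
    """Time after which a free-running counter of the given width wraps to zero."""
    return (1 << bits) / clock_hz


for f_mhz in (50, 125, 500):
    f_hz = f_mhz * 1e6
    print(f"{f_mhz} MHz: tick = {1e9 / f_hz:.1f} ns, "
          f"rollover after {rollover_period_s(f_hz):.2f} s")
```

A faster clock gives a finer tick but a shorter rollover period, so acquisitions longer than that period need to account for the wrap when computing time differences.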

Readout list data using Resource Explorer

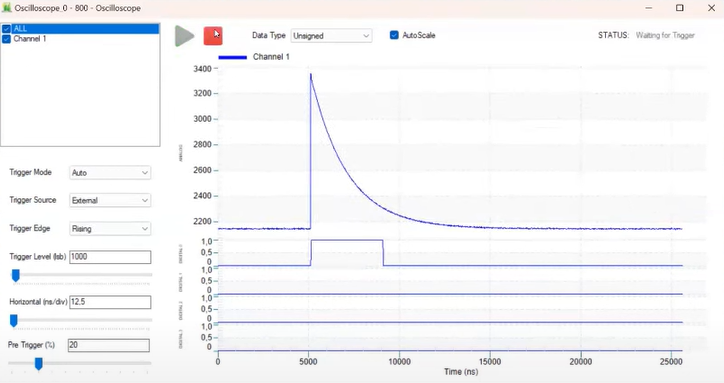

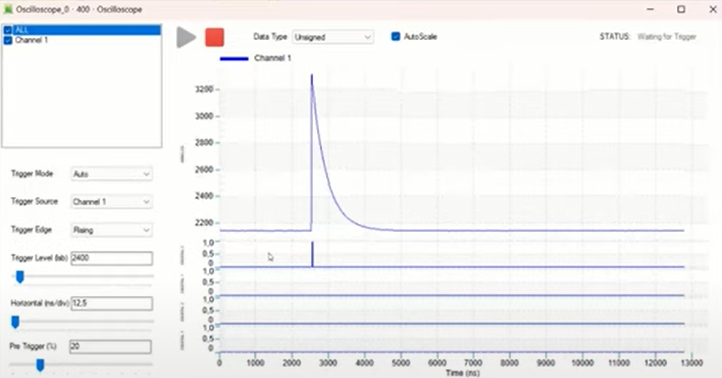

Sci-Compiler can connect to one of the two supported board types and explore the features of the FPGA firmware that the user has graphically designed, compiled, and downloaded to the FPGA with the application software. The Resource Explorer can interface with both the Oscilloscope and the List in the design. The Oscilloscope component is one of the tools that can be used to monitor the signals inside the design. We can use it to find the correct threshold for the trigger.

Trigger mode selects the trigger source and the trigger edge. The trigger source can be an internal analog channel, an external digital signal, or a self-periodic trigger (useful to look at the baseline and search for a valid trigger level). When the internal trigger is selected, the trigger level can be set by looking at the waveform and choosing a level above the noise.

The Resource Explorer also allows setting the decimation factor and the ratio between pre-trigger samples (the number of samples before the trigger) and post-trigger samples.

We also need to set the Threshold (THRS) register to about 2400 in order to have a clear trigger pulse.
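Choosing a level above the noise can also be done numerically from baseline samples. The sketch below is one possible heuristic, not the procedure used by the Resource Explorer: it assumes positive-going pulses, places the threshold a few noise standard deviations above the mean baseline, and uses invented ADC values. The resulting number still has to be checked against the real waveform.

```python
from statistics import mean, pstdev


def suggest_threshold(baseline_samples, n_sigma=5.0):
    """Suggest a trigger level n_sigma noise standard deviations above the baseline."""
    base = mean(baseline_samples)
    noise = pstdev(baseline_samples)
    return int(round(base + n_sigma * noise))


# Hypothetical ADC samples acquired with the periodic trigger, no pulse present.
baseline = [2301, 2298, 2305, 2299, 2302, 2297, 2303, 2300]
print(suggest_threshold(baseline))
```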

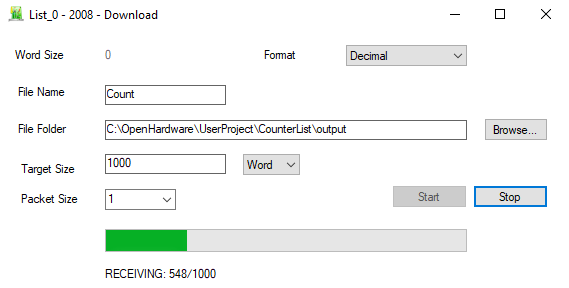

At this point we can open the List download tool and specify a folder and a file name, the number of 32-bit words to transfer, and the size of each single read access to the board. The larger the transfer size, the more efficient the access to the board and the higher the achievable speed. A transfer size that is too large, however, slows down UI updates and reduces the real-time responsiveness of the processing.

Readout list data using Sci-SDK

Sci-SDK is a hardware-agnostic library that interfaces with CAEN Open hardware boards developed with Sci-Compiler. It provides a common, hierarchical interface to manage the elements of a design, so data acquisition, readout, and analysis code works the same way regardless of the specific board being used.

The Sci-SDK library is available for most common programming languages, including C/C++, Python, and LabVIEW. The library is open-source and available on GitHub. Sci-SDK Information

The following code snippet shows how to use the Sci-SDK library to read the list after configuring the trigger register. The script sets the THRS register (trigger level) to 2350 and then reads the timestamps from the list. The timestamps are stored in an array, and the time difference between consecutive events is calculated and plotted as a histogram, which makes it possible to see the Poisson statistics of the events.

The code snippet is written in Python and uses the Sci-SDK Python library.

First of all, the Sci-SDK library must be installed system-wide: use the installer on Windows or the package manager on Linux. Make sure to install the Sci-SDK binary files; the pip package is only a wrapper around them and does not include the library files.

Read more about List Driver on GitHub Docs

- Install the Sci-SDK library and matplotlib

pip install matplotlib

pip install scisdk

- Create a new python file and import the Sci-SDK library

Replace the usb:10500 with the PID of your board or

    from scisdk.scisdk import SciSDK
    import matplotlib.pyplot as plt
    from struct import unpack

    # initialize Sci-SDK library
    sdk = SciSDK()

    # DT1260
    res = sdk.AddNewDevice("usb:28686", "dt1260", "./library/RegisterFile.json", "board0")
    if res != 0:
        print("Script exit due to connection error")
        exit()

    # set the trigger threshold
    res = sdk.SetRegister("board0:/Registers/THRS", 2350)
    if res != 0:
        print("Configuration error")
        exit()

    # configure the list readout (background thread, timeout, blocking acquisition)
    res = sdk.SetParameterString("board0:/MMCComponents/List_1.thread", "true")
    res = sdk.SetParameterInteger("board0:/MMCComponents/List_1.timeout", 500)
    res = sdk.SetParameterString("board0:/MMCComponents/List_1.acq_mode", "blocking")

    # allocate buffer raw, size 100
    res, buf = sdk.AllocateBuffer("board0:/MMCComponents/List_1", 100)

    # restart the acquisition from a known state
    res = sdk.ExecuteCommand("board0:/MMCComponents/List_1.stop", "")
    res = sdk.ExecuteCommand("board0:/MMCComponents/List_1.start", "")

    # collect timestamps until more than 100000 events have been read
    array_time = []
    while True:
        res, buf = sdk.ReadData("board0:/MMCComponents/List_1", buf)
        if res == 0:
            # each timestamp is one 32-bit (4-byte) little-endian word
            for i in range(0, int(buf.info.valid_samples / 4)):
                T = unpack('<L', buf.data[i*4:i*4+4])[0]
                array_time.append(T)
            print(len(array_time))
        if len(array_time) > 100000:
            break

    # time difference between consecutive events
    time_delta = []
    for i in range(1, len(array_time)):
        time_delta.append(array_time[i] - array_time[i-1])

    # plt.show() blocks until the plot window is closed
    plt.hist(time_delta, bins=256)
    plt.show()

The unpack function converts the byte array received from the LIST into unsigned 32-bit integers.

The format string '<L' selects an unsigned 32-bit integer (UINT32) in little-endian byte order.
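The decoding step can be tried without any hardware. The sketch below uses only the standard `struct` module on a synthetic byte buffer, so it makes no assumption about the Sci-SDK buffer object. The helper names are hypothetical. It also shows one way to compute time differences that stay correct when the 32-bit counter rolls over between two events, assuming at most one rollover between consecutive timestamps.

```python
from struct import iter_unpack, pack

MASK32 = 0xFFFFFFFF


def decode_timestamps(raw: bytes):
    """Decode a byte buffer into a list of little-endian unsigned 32-bit words."""
    usable = len(raw) - (len(raw) % 4)   # ignore an incomplete trailing word
    return [word for (word,) in iter_unpack("<L", raw[:usable])]


def wrapped_deltas(timestamps):
    """Differences between consecutive timestamps, modulo 2**32."""
    return [(b - a) & MASK32 for a, b in zip(timestamps, timestamps[1:])]


# Synthetic buffer: three timestamps, the last one taken after a rollover.
raw = pack("<3L", 0xFFFFFFF0, 0xFFFFFFFA, 0x00000004)
ts = decode_timestamps(raw)
print(ts)
print(wrapped_deltas(ts))
```

A plain subtraction would give a large negative value for the last pair; masking the difference to 32 bits recovers the true interval in ticks.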

DT1260RegisterFile.json is the register file generated by Sci-Compiler. It is used to enumerate all components present in the Sci-Compiler design. Double-check the path of the file, or copy it into the same folder as the Python script. The file is automatically generated by Sci-Compiler in the folder “library” inside the project folder.

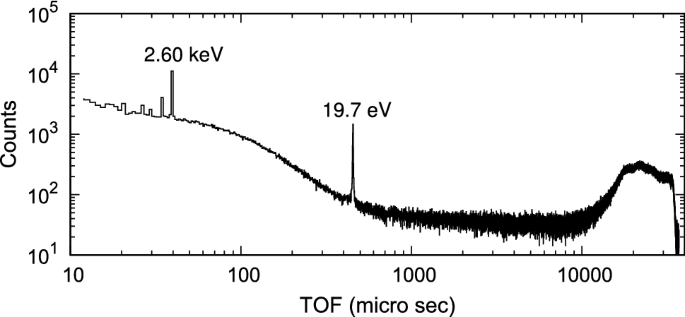

Calculated time distribution of the events

The distribution of the time intervals between consecutive events collected by the detector has an exponential shape because the events follow Poisson statistics.

![Distribution of the T[n]-T[n-1]](../images/010/time-dist.png)

The exponential shape of the time distribution of events collected by a detector in many cases arises from the underlying statistical nature of the event generation process, which follows a Poisson distribution.

The Poisson distribution is a probability distribution that describes the number of events occurring in a fixed interval of time or space when the events are independent of each other and the average rate of occurrence is constant. It is commonly used to model random processes where events happen independently and at a constant average rate.

In the context of event detection, the Poisson distribution implies that events occur randomly in time, and the average rate of event occurrence remains constant over a given time interval. Each event is independent of the others, meaning that the timing of one event does not influence the timing of subsequent events.

When events are generated according to a Poisson process, the time intervals between successive events follow an exponential distribution. The exponential distribution is characterized by a constant rate parameter (often denoted as λ), which represents the average number of events occurring per unit time. The probability density function of the exponential distribution is given by:

f(t) = λ * exp(-λt)

where t represents the time elapsed since the previous event.

As a result, the time distribution of events collected by a detector will exhibit an exponential shape if the underlying event generation process follows a Poisson distribution. This means that shorter time intervals between events are more likely than longer ones, with the probability decaying exponentially as the time interval increases.

It’s important to note that the exponential shape of the time distribution may be influenced by other factors as well, such as detector response time or experimental conditions. However, the fundamental statistical nature of the event generation process, characterized by the Poisson distribution, often contributes to the observed exponential shape in many scenarios.
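The relation between a Poisson process and exponentially distributed intervals can be checked with a short simulation that needs no hardware. The sketch below draws intervals with the standard library function `random.expovariate`, estimates λ as the inverse of the mean interval, and compares the fraction of intervals longer than t with the expected survival probability exp(-λt). The 1000 events/s rate, the seed, and the helper names are arbitrary choices for illustration.

```python
import math
import random


def simulate_intervals(rate_hz, n_events, seed=1234):
    """Draw n_events inter-arrival times (seconds) of a Poisson process."""
    rng = random.Random(seed)
    return [rng.expovariate(rate_hz) for _ in range(n_events)]


def estimate_rate(intervals):
    """Maximum-likelihood estimate of lambda: 1 / mean interval."""
    return len(intervals) / sum(intervals)


rate = 1000.0                      # hypothetical true rate, events per second
dt = simulate_intervals(rate, 100_000)
lam = estimate_rate(dt)
print(f"estimated rate: {lam:.1f} events/s")

# Fraction of intervals longer than t, compared with exp(-lambda * t).
for t in (0.5e-3, 1e-3, 2e-3):
    observed = sum(x > t for x in dt) / len(dt)
    expected = math.exp(-lam * t)
    print(f"t = {t * 1e3:.1f} ms  observed {observed:.4f}  expected {expected:.4f}")
```

The same estimate can be applied to measured intervals after converting ticks to seconds; a clear deviation from the exponential shape at short intervals is often a sign of dead time or pile-up in the acquisition chain.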